Introduction

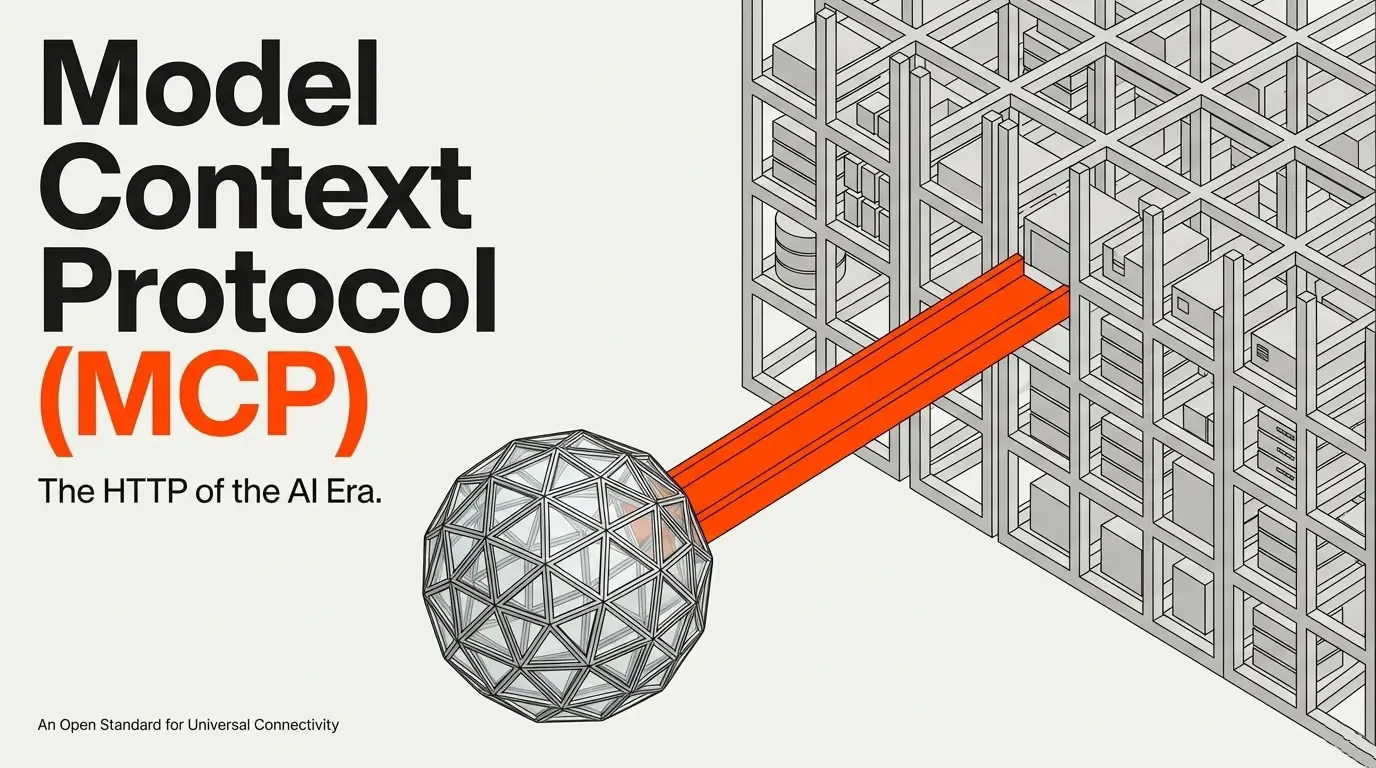

In November 2024, Anthropic introduced the Model Context Protocol (MCP), an open standard designed to fundamentally change how AI applications connect with external systems. As Large Language Models (LLMs) become increasingly integrated into production environments, the challenge of providing them with secure, efficient, and standardized access to external data has become critical.

MCP addresses this challenge by establishing a universal protocol that enables AI systems to seamlessly interact with databases, APIs, file systems, and other resources through a common interface.

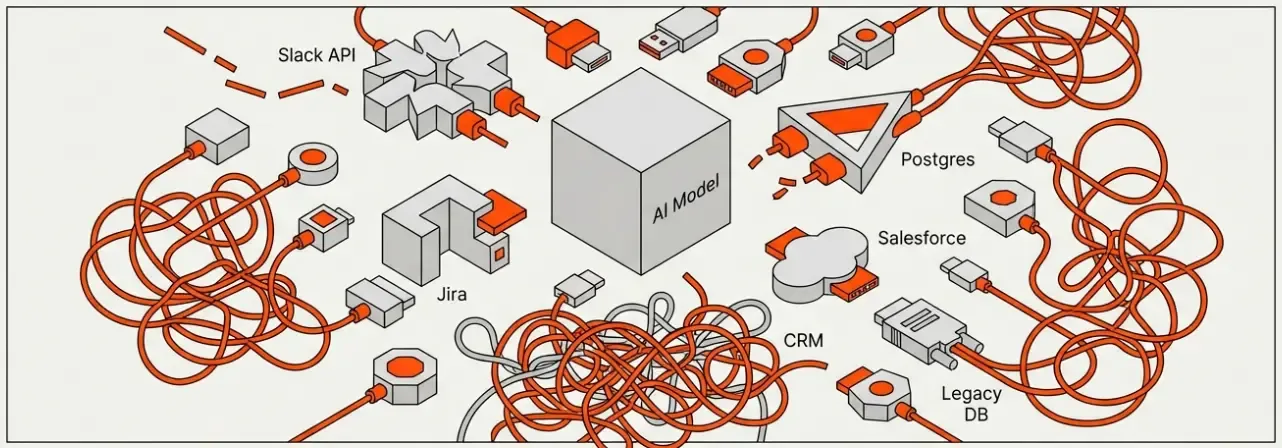

The Problem: Context Fragmentation

Modern AI assistants face a significant architectural challenge: context fragmentation. Each application requires custom integration code to connect with different data sources:

- Custom connectors for each database type.

- Proprietary APIs for file system access.

- Bespoke implementations for cloud services.

- Application-specific tool interfaces.

This fragmentation leads to:

- Development overhead: Building and maintaining multiple integrations.

- Security risks: Inconsistent security implementations across connectors.

- Limited interoperability: Solutions locked into specific ecosystems.

- Reduced scalability: Difficulty adding new data sources.

Before MCP, developers had to choose between:

- Direct integration: Tight coupling between AI models and data sources.

- Custom middleware: Building proprietary abstraction layers.

- Limited functionality: Restricting AI capabilities to pre-integrated services.

What is MCP?

The Model Context Protocol is an open-source, standardized protocol that defines how AI applications (clients) communicate with data sources and tools (servers) to provide context to language models.

Core Design Principles

- Universality: One protocol for all types of external resources.

- Security: Built-in authentication and authorization mechanisms.

- Simplicity: Easy to implement for both clients and servers.

- Extensibility: Support for custom resource types and capabilities.

- Interoperability: Platform and vendor-agnostic design.

Architecture Overview

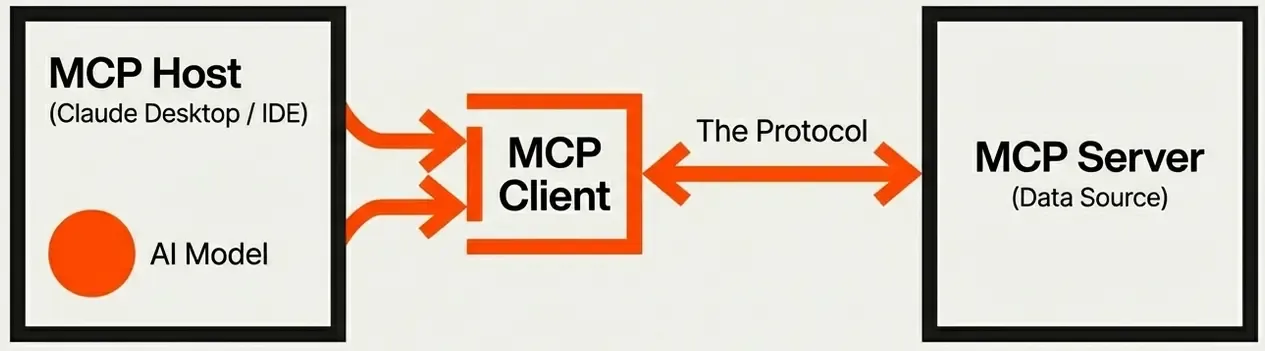

MCP follows a client-server architecture:

Components:

- MCP Host: Application embedding the AI model (e.g., Claude Desktop, IDE).

- MCP Client: Component within the host that opens and maintains a one-to-one connection with a single server.

- MCP Server: Service that exposes resources, tools, or prompts to clients.

- Resources: Data sources like files, database records, or API responses.

- Tools: Executable functions the AI can invoke.

- Prompts: Pre-configured prompt templates for specific tasks.
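The relationships between these components can be sketched as plain data structures. The class and field names below are illustrative only and do not come from any MCP SDK; the sketch assumes a host owns several clients and each client is bound to exactly one server.

```python
from dataclasses import dataclass, field


@dataclass
class McpServer:
    """Hypothetical stand-in for a server and what it exposes."""
    name: str
    resources: list[str] = field(default_factory=list)  # resource URIs
    tools: list[str] = field(default_factory=list)      # tool names
    prompts: list[str] = field(default_factory=list)    # prompt names


@dataclass
class McpClient:
    """One client talks to exactly one server."""
    server: McpServer


@dataclass
class McpHost:
    """The application embedding the model; it owns one client per server."""
    name: str
    clients: list[McpClient] = field(default_factory=list)

    def connect(self, server: McpServer) -> McpClient:
        client = McpClient(server)
        self.clients.append(client)
        return client

    def available_tools(self) -> list[str]:
        # Aggregate tool names across every connected server.
        return [f"{c.server.name}/{t}" for c in self.clients for t in c.server.tools]


host = McpHost("example-ide")
host.connect(McpServer("filesystem", resources=["file:///project/src/main.py"]))
host.connect(McpServer("database", tools=["search_database"]))
print(host.available_tools())
```

Prefixing each tool with its server name is one possible way for a host to avoid name collisions when several servers expose similarly named tools.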

Key Features and Capabilities

1. Resources

Resources are the fundamental unit of context in MCP. They represent any data that an AI might need:

    {
      "uri": "file:///project/src/main.py",
      "mimeType": "text/x-python",
      "text": "def hello():\n    print('Hello, MCP!')"
    }

Resource types include:

- File contents.

- Database query results.

- API responses.

- Live system metrics.

- Document snippets.
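A server has to produce objects of this shape for whatever data it exposes. The helper below is a minimal sketch using only the Python standard library; `make_text_resource` is a hypothetical name, and guessing the MIME type from the file extension is an assumption of this example rather than something MCP requires.

```python
import json
import mimetypes
from pathlib import PurePosixPath
from urllib.parse import urlparse


def make_text_resource(uri: str, text: str) -> dict:
    """Build a resource dict with the same keys as the JSON example earlier in this section."""
    path = PurePosixPath(urlparse(uri).path)
    mime_type, _ = mimetypes.guess_type(path.name)
    return {
        "uri": uri,
        # Fall back to plain text when the extension is unknown.
        "mimeType": mime_type or "text/plain",
        "text": text,
    }


resource = make_text_resource(
    "file:///project/src/main.py",
    "def hello():\n    print('Hello, MCP!')",
)
print(json.dumps(resource, indent=2))
```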

2. Tools

Tools allow AI models to take actions in the external world:

    {
      "name": "search_database",
      "description": "Search the user database by criteria",
      "inputSchema": {
        "type": "object",
        "properties": {
          "query": {"type": "string"},
          "limit": {"type": "number"}
        }
      }
    }

Common tool patterns:

- Data queries and searches.

- File system operations.

- API calls to external services.

- System command execution.

- Workflow triggers.
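Because a tool's `inputSchema` is ordinary JSON Schema, a server can check arguments before running anything. The sketch below hand-rolls a very small subset of that validation (flat properties, basic types, required keys) for the `search_database` definition shown earlier in this section. The `required` entry and the `validate_arguments` helper are assumptions added for illustration; a real server would more likely rely on a full JSON Schema validator.

```python
SEARCH_TOOL = {
    "name": "search_database",
    "description": "Search the user database by criteria",
    "inputSchema": {
        "type": "object",
        "properties": {
            "query": {"type": "string"},
            "limit": {"type": "number"},
        },
        "required": ["query"],  # assumption: not part of the original definition
    },
}

# Maps JSON Schema type names to the Python types that satisfy them.
JSON_TYPES = {
    "string": str,
    "number": (int, float),
    "integer": int,
    "boolean": bool,
    "object": dict,
    "array": list,
}


def validate_arguments(tool: dict, arguments: dict) -> list[str]:
    """Return a list of problems; an empty list means the call looks valid."""
    schema = tool["inputSchema"]
    properties = schema.get("properties", {})
    errors = [
        f"missing required argument: {name}"
        for name in schema.get("required", [])
        if name not in arguments
    ]
    for name, value in arguments.items():
        if name not in properties:
            continue  # JSON Schema allows additional properties by default
        type_name = properties[name]["type"]
        expected = JSON_TYPES[type_name]
        # bool is a subclass of int in Python, so reject it for numeric types.
        is_stray_bool = isinstance(value, bool) and type_name != "boolean"
        if is_stray_bool or not isinstance(value, expected):
            errors.append(f"{name}: expected {type_name}")
    return errors


print(validate_arguments(SEARCH_TOOL, {"query": "alice", "limit": 10}))
print(validate_arguments(SEARCH_TOOL, {"limit": "ten"}))
```

The first call returns an empty list; the second reports both the missing `query` and the wrongly typed `limit`.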

3. Prompts

Prompts are reusable templates that help guide AI behavior:

    {
      "name": "code_review",
      "description": "Review code changes for security issues",
      "arguments": [
        {
          "name": "file_path",
          "description": "Path to the file to review",
          "required": true
        }
      ]
    }

4. Sampling

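Sampling reverses the usual direction of a request: a server asks the client to generate a completion from the host's language model (the `sampling/createMessage` method), typically subject to user approval. This supports agent-driven interactions and enables: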

- Multi-turn conversations.

- Autonomous workflows.

- Complex decision trees.

- Context-aware responses.
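A sampling request travels from server to client as an ordinary JSON-RPC message. The sketch below builds one in Python. The method name `sampling/createMessage` and the `messages` and `maxTokens` parameters follow the MCP specification and should be checked against the revision you target; the helper name, the request counter, and the prompt text are made up for this example.

```python
import itertools
import json

# Simple monotonically increasing JSON-RPC request ids.
_request_ids = itertools.count(1)


def sampling_request(prompt: str, max_tokens: int = 200) -> dict:
    """Build a server-to-client JSON-RPC request asking for a completion."""
    return {
        "jsonrpc": "2.0",
        "id": next(_request_ids),
        "method": "sampling/createMessage",
        "params": {
            "messages": [
                {"role": "user", "content": {"type": "text", "text": prompt}}
            ],
            "maxTokens": max_tokens,
        },
    }


request = sampling_request("Summarize the open incidents in two sentences.")
print(json.dumps(request, indent=2))
```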

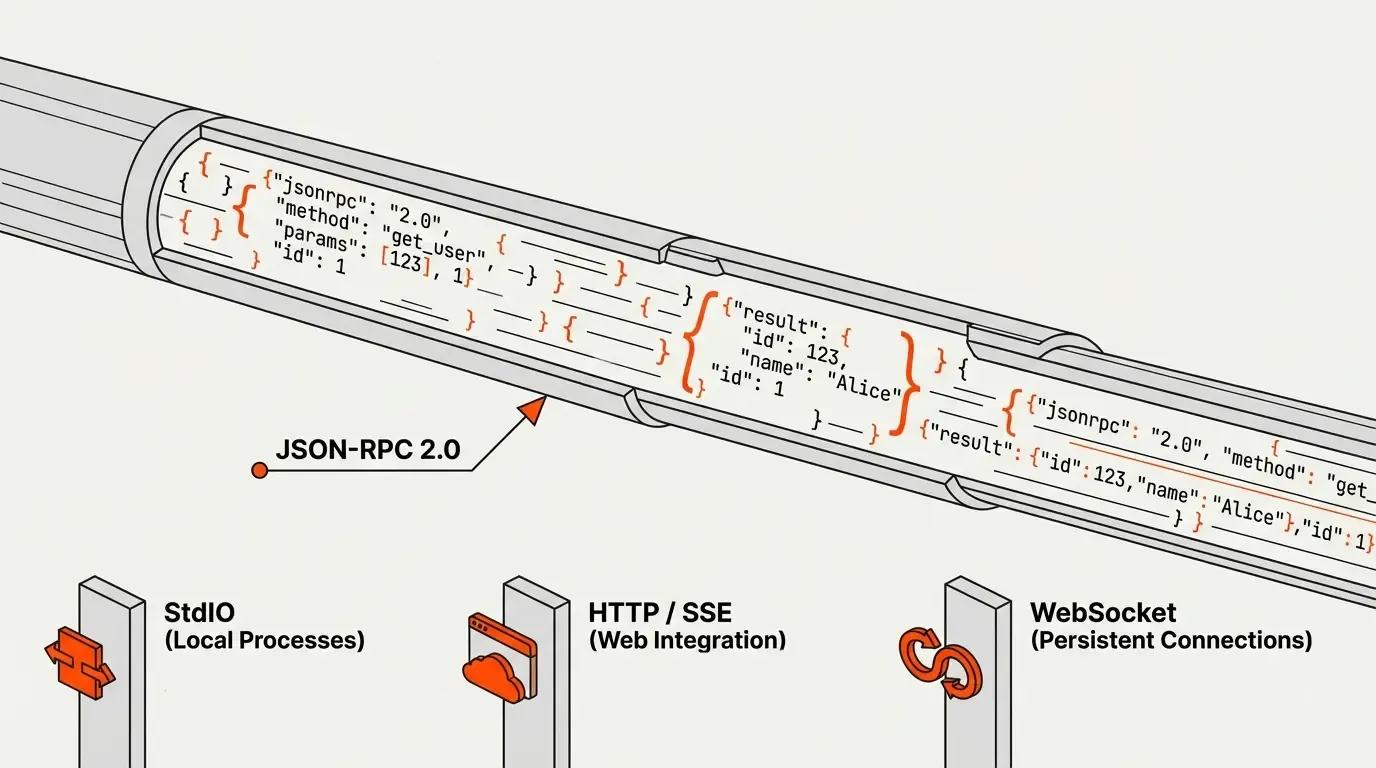

Protocol Communication

MCP uses JSON-RPC 2.0 over various transport layers:

Transport Options

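- Standard I/O (stdio): For local processes; the client launches the server as a subprocess and exchanges messages over its stdin and stdout.

- HTTP with SSE: For web-based integrations. Later revisions of the specification replace this with a Streamable HTTP transport.

- WebSocket: Not one of the transports defined by the specification, but implementable as a custom transport where persistent connections are needed.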
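For the stdio transport, the specification frames each JSON-RPC message as a single line of JSON terminated by a newline. The following is a minimal sketch of that framing using only the standard library. An in-memory buffer stands in for the subprocess pipe, and the `search` tool and its arguments are placeholders.

```python
import io
import json


def write_message(stream, message: dict) -> None:
    """Serialize one JSON-RPC message as a single line."""
    # json.dumps escapes newlines inside strings, so the frame stays on one line.
    stream.write(json.dumps(message, separators=(",", ":")) + "\n")


def read_messages(stream):
    """Yield each JSON-RPC message from a newline-delimited stream."""
    for line in stream:
        line = line.strip()
        if line:
            yield json.loads(line)


# An in-memory buffer stands in for a child process's stdin/stdout pipe.
pipe = io.StringIO()
write_message(pipe, {"jsonrpc": "2.0", "id": 1, "method": "resources/list"})
write_message(pipe, {
    "jsonrpc": "2.0",
    "id": 2,
    "method": "tools/call",
    "params": {"name": "search", "arguments": {"query": "line one\nline two"}},
})

pipe.seek(0)
for message in read_messages(pipe):
    print(message["id"], message["method"])
```

The second message shows why the framing is safe: the newline inside the query is escaped during serialization and restored on parsing.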

Message Flow Example

Client Request:

    {
      "jsonrpc": "2.0",
      "id": 1,
      "method": "resources/read",
      "params": {
        "uri": "database://users/table/customers"
      }
    }

Server Response:

    {
      "jsonrpc": "2.0",
      "id": 1,
      "result": {
        "contents": [
          {
            "uri": "database://users/table/customers",
            "mimeType": "application/json",
            "text": "[{\"id\": 1, \"name\": \"Alice\"}]"
          }
        ]
      }
    }

Security Model

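MCP deployments apply security at multiple layers. Some of these controls are described by the specification, while others, such as sandboxing and audit logging, are the responsibility of the host and server implementations: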

1. Authentication

- OAuth 2.0 support.

- API key authentication.

- Certificate-based authentication.

2. Authorization

- Granular resource permissions.

- Tool execution policies.

- Capability-based security.

3. Sandboxing

- Resource access restrictions.

- Network isolation options.

- Rate limiting and quotas.

4. Audit Logging

- Complete request/response logging.

- User action tracking.

- Compliance reporting.
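As a rough illustration of how authorization, rate limiting, and audit logging might fit together in a server, the sketch below gates tool calls behind a per-role allow-list and a per-user quota, recording every decision. Nothing here is defined by MCP itself: `ToolPolicy`, the role names, and the quota are all hypothetical.

```python
import json
import time
from dataclasses import dataclass, field


@dataclass
class ToolPolicy:
    """Hypothetical per-role allow-list with a simple per-user call quota."""
    allowed_tools: dict[str, set[str]]
    max_calls_per_minute: int = 30
    _calls: dict[str, list[float]] = field(default_factory=dict)
    audit_log: list[str] = field(default_factory=list)

    def authorize(self, user: str, role: str, tool: str) -> bool:
        now = time.monotonic()
        # Keep only this user's calls from the last 60 seconds.
        recent = [t for t in self._calls.get(user, []) if now - t < 60]
        allowed = tool in self.allowed_tools.get(role, set())
        within_quota = len(recent) < self.max_calls_per_minute
        decision = allowed and within_quota
        if decision:
            recent.append(now)
        self._calls[user] = recent
        # Every decision is recorded, including denials.
        self.audit_log.append(json.dumps(
            {"user": user, "role": role, "tool": tool, "allowed": decision}
        ))
        return decision


policy = ToolPolicy({"analyst": {"search_database"}}, max_calls_per_minute=2)
print(policy.authorize("alice", "analyst", "search_database"))  # allowed
print(policy.authorize("alice", "analyst", "drop_table"))       # not on the allow-list
print(policy.authorize("alice", "analyst", "search_database"))  # allowed
print(policy.authorize("alice", "analyst", "search_database"))  # quota reached
```

Denied calls do not count against the quota in this sketch; a stricter policy might count them to slow down probing.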

Implementation Patterns

Building an MCP Server

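A basic Python MCP server, sketched against the low-level API of the official Python SDK (handler signatures have changed between SDK releases, so check the version you have installed):

    import asyncio
    from urllib.parse import unquote, urlparse

    import mcp.types as types
    from mcp.server import Server
    from mcp.server.stdio import stdio_server

    server = Server("filesystem-server")


    @server.list_resources()
    async def list_resources() -> list[types.Resource]:
        # Advertise the resources this server can provide.
        return [
            types.Resource(
                uri="file:///home/user/document.txt",
                name="document.txt",
                mimeType="text/plain",
            )
        ]


    @server.read_resource()
    async def read_resource(uri) -> str:
        # Parse the URI rather than string-replacing the scheme. Real code
        # should also check the path against an allow-list before opening it.
        path = unquote(urlparse(str(uri)).path)
        with open(path, encoding="utf-8") as f:
            return f.read()


    async def main() -> None:
        # Serve over stdio until the client disconnects.
        async with stdio_server() as (read_stream, write_stream):
            await server.run(
                read_stream, write_stream, server.create_initialization_options()
            )


    if __name__ == "__main__":
        asyncio.run(main())

Connecting an MCP Client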

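    import asyncio

    from mcp import ClientSession, StdioServerParameters
    from mcp.client.stdio import stdio_client

    # Assumption: the server is saved as server.py and exposes a "search" tool.
    params = StdioServerParameters(command="python", args=["server.py"])


    async def main() -> None:
        async with stdio_client(params) as (read, write):
            async with ClientSession(read, write) as session:
                await session.initialize()
                # List available resources
                listing = await session.list_resources()
                # Read a specific resource
                content = await session.read_resource(listing.resources[0].uri)
                # Execute a tool
                result = await session.call_tool("search", {"query": "python"})


    asyncio.run(main())

Use Cases and Applications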

1. Development Environments

Scenario: IDE integration for AI-assisted coding

MCP enables:

- Read project files and dependencies.

- Execute tests and linters.

- Access version control history.

- Query documentation databases.

2. Enterprise Data Access

Scenario: AI assistant with access to corporate databases

MCP enables:

- Secure database queries.

- CRM system integration.

- Document repository access.

- Business intelligence tool integration.

3. DevOps and Monitoring

Scenario: AI-powered incident response

MCP enables:

- Log aggregation access.

- Metrics and alerting integration.

- Infrastructure provisioning tools.

- Deployment pipeline control.

4. Research and Data Science

Scenario: AI assistant for data analysis

MCP enables:

- Dataset access and querying.

- Statistical tool execution.

- Visualization generation.

- Experiment tracking integration.

Ecosystem and Adoption

Official Implementations

As of late 2024, MCP includes:

- TypeScript SDK: Full-featured client and server implementation.

- Python SDK: Growing library with async support.

- Claude Desktop: First-party MCP host support.

Community Servers

The community has rapidly developed MCP servers for:

- Databases: PostgreSQL, MySQL, MongoDB, Redis.

- Cloud Platforms: AWS, Google Cloud, Azure.

- Development Tools: GitHub, GitLab, Linear.

- File Systems: Local, S3, Google Drive.

- APIs: REST, GraphQL, gRPC bridges.

Integration Partners

Organizations adopting MCP:

- IDE vendors (VS Code extensions).

- Database providers.

- Cloud platforms.

- Enterprise software vendors.

Comparison with Alternatives

Function Calling vs. MCP

Function Calling (OpenAI, Anthropic):

- Model-specific JSON schema.

- Coupled to specific AI provider.

- Limited to predefined functions.

- No resource abstraction.

MCP:

- Universal protocol across models.

- Provider-agnostic.

- Dynamic resource discovery.

- Standardized security model.
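In practice the two often work together: a host discovers tools through MCP and then hands them to the model using the provider's function-calling format. The sketch below converts an MCP tool definition into the shapes used by two providers. The field names reflect the public Anthropic Messages API and OpenAI Chat Completions formats at the time of writing and should be verified against current provider documentation; the helper names are invented for this example.

```python
MCP_TOOL = {
    "name": "search_database",
    "description": "Search the user database by criteria",
    "inputSchema": {
        "type": "object",
        "properties": {
            "query": {"type": "string"},
            "limit": {"type": "number"},
        },
    },
}


def to_anthropic_tool(mcp_tool: dict) -> dict:
    """Shape expected in the `tools` list of Anthropic's Messages API."""
    return {
        "name": mcp_tool["name"],
        "description": mcp_tool.get("description", ""),
        "input_schema": mcp_tool["inputSchema"],
    }


def to_openai_tool(mcp_tool: dict) -> dict:
    """Shape expected in the `tools` list of OpenAI's Chat Completions API."""
    return {
        "type": "function",
        "function": {
            "name": mcp_tool["name"],
            "description": mcp_tool.get("description", ""),
            "parameters": mcp_tool["inputSchema"],
        },
    }


print(to_anthropic_tool(MCP_TOOL)["input_schema"] == MCP_TOOL["inputSchema"])
print(to_openai_tool(MCP_TOOL)["function"]["name"])
```

The JSON Schema passes through unchanged in both cases; only the envelope around it is provider-specific.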

LangChain vs. MCP

LangChain:

- Application framework.

- Python/TypeScript specific.

- Custom agent architecture.

- High-level abstractions.

MCP:

- Protocol specification.

- Language-agnostic.

- Standardized communication.

- Lower-level primitive.

Custom APIs vs. MCP

Custom APIs:

- Application-specific.

- Fragmented implementations.

- No standard security model.

- High maintenance overhead.

MCP:

- Universal standard.

- Consistent interface.

- Built-in security patterns.

- Interoperable ecosystem.

Challenges and Limitations

Current Limitations

- Early Stage: Limited production deployments.

- Performance: Protocol overhead for high-frequency operations.

- Complexity: Learning curve for implementation.

- Tooling: Nascent debugging and monitoring tools.

- Standardization: Evolving specifications.

Open Questions

- State management: How to handle long-running operations.

- Versioning: Protocol evolution and backward compatibility.

- Federation: Multi-server coordination patterns.

- Optimization: Caching and performance tuning strategies.
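On versioning, the specification already defines a basic handshake: the client proposes a protocol version during initialization, the server echoes it if supported or answers with a version it does support, and the client disconnects if it cannot work with the answer. The sketch below models only that decision logic. The function names are hypothetical, the version lists are example values, and "2023-01-01" is a deliberately made-up version string.

```python
def negotiate_version(requested: str, server_supported: list[str]) -> str:
    """Server side: echo the client's version if supported, else offer our newest."""
    return requested if requested in server_supported else server_supported[0]


def client_accepts(offered: str, client_supported: list[str]) -> bool:
    """Client side: continue only if the server's answer is a version we speak."""
    return offered in client_supported


SERVER_VERSIONS = ["2025-03-26", "2024-11-05"]  # newest first (example values)

# A client that speaks an older revision the server still supports.
offered = negotiate_version("2024-11-05", SERVER_VERSIONS)
print(offered, client_accepts(offered, ["2024-11-05"]))

# A client proposing a version the server does not know (made-up string).
offered = negotiate_version("2023-01-01", SERVER_VERSIONS)
print(offered, client_accepts(offered, ["2023-01-01"]))
```

The first exchange settles on the shared revision; in the second the server offers its newest version, which this client cannot accept, so the connection would be closed.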

Future Directions

Short-term Evolution

- Enhanced authentication: Multi-factor and federated identity.

- Streaming support: Large resource transfers.

- Batch operations: Efficient multi-resource access.

- Error handling: Standardized retry and fallback patterns.

Long-term Vision

- Universal AI Context Layer: MCP as the standard for all AI-data interactions.

- Marketplace Ecosystem: Certified MCP server marketplace.

- Enterprise Adoption: MCP as corporate standard for AI integration.

- Cross-model Compatibility: Seamless switching between AI providers.

- Advanced Security: Zero-trust architectures and compliance frameworks.

Practical Recommendations

For Developers

- Start experimenting: Build simple MCP servers for your tools.

- Contribute: Join the open-source community.

- Design patterns: Study existing server implementations.

- Security first: Implement proper authentication from the start.

For Organizations

- Evaluate readiness: Assess data sources and use cases.

- Pilot projects: Start with low-risk integrations.

- Security review: Establish MCP governance policies.

- Training: Educate teams on MCP concepts and patterns.

For the Community

- Standardization: Contribute to specification development.

- Documentation: Create tutorials and best practices.

- Tooling: Build debugging and monitoring solutions.

- Advocacy: Promote adoption and interoperability.

Conclusion

The Model Context Protocol represents a pivotal moment in AI infrastructure. By establishing a universal standard for how AI systems interact with external data and tools, MCP addresses one of the most pressing challenges in modern AI applications: bridging the gap between powerful language models and the rich context they need to be truly useful.

While still in its early stages, MCP has the potential to become the HTTP of the AI era — a foundational protocol that enables seamless integration, promotes security, and fosters innovation.

As the ecosystem matures, we can expect:

- Broader adoption across AI platforms.

- Richer tooling and development frameworks.

- Standardized security and compliance patterns.

- A thriving marketplace of MCP-compatible services.

For developers and organizations building AI-powered applications, now is the time to engage with MCP — to shape its evolution, contribute to its ecosystem, and prepare for a future where AI seamlessly integrates with every data source and tool.

About this series: This post is part of an ongoing exploration of modern AI infrastructure and architecture patterns. Future posts will cover practical MCP implementation, advanced security patterns, and real-world case studies.